This article is part of the Remote Control Warfare series, a collaboration with Remote Control, a project of the Network for Social Change hosted by Oxford Research Group.

This article by Esther Kersley, Katherine Tajer and Alberto Muti originally appeared on openDemocracy on 7 November 2014.

Cyber space is a confusing place. As current discussions highlight the possibility of “major” cyber attacks causing significant loss of life or large-scale destruction, it is becoming harder to determine whether these claims are hype or justified fears. A new report by VERTIC, commissioned by the Remote Control project, offers some clarity on the subject by assessing the major issues in cyber security today, with the aim of better informing the debate and establishing what threats and challenges cyber issues really pose to international peace and security.

How much of a threat are cyber attacks?

Cyber attacks have been identified as one of the greatest threats facing developed nations. Indeed, the US is spending $26 billion over the next five years on cyber operations and building a 6,000-strong cyber force by 2016, and the UK has earmarked £650 million over four years to combat cyber threats. This level of investment suggests that states treat cyber security as a question of national security. But how much of a threat do cyber attacks actually pose to national security, and how much damage have they caused?

There is a need for caution when assessing the risk that cyber threats pose to national security. Although states are investing heavily in cyber security, the majority of cyber incidents that have made the news to date have not directly affected a state’s sovereignty or threatened its survival. For that to happen, an attack would have to significantly impair a government’s ability to control its territory, inflict damage on critical infrastructure or, potentially, cause mass casualties.

Nevertheless, some notable cyber attacks have had a significant impact on international relations over the past decades. These are ‘Stuxnet’, the cyber attack targeting Iranian uranium centrifuges (allegedly launched by a combined US-Israeli operation), the ‘Nashi’ attacks on Estonian government and private sector websites and web-based services, and the many instances of cyber espionage that form the so-called ‘Cool War’ currently taking place between China and the US. Cyber attacks have also been used as instruments of war in conjunction with conventional military operations, for example during the Russo-Georgian conflict in 2008 and, most significantly, during the Israeli air raid against a nuclear reactor facility in Syria in 2007.

However, to date no attack has led to large-scale destruction or loss of life, suggesting that such an outcome is unlikely. This is because of the great technological expertise, material resources and target intelligence required to carry out such an attack. These resources are currently only in the hands of states, which might hesitate to use cyber attacks in this way when other means are available. This could, of course, change, especially if other political actors acquired the necessary means.

What should we be concerned about?

This is not to say we have nothing to be concerned about. Although a large-scale cyber attack that inflicts mass casualties is unlikely to occur in the near future, cyber activities can still affect civilian lives in other ways. The hyperbolic language used to describe the potential consequences of cyber attacks, combined with a lack of reliable, concrete information on the real risks posed by cyber threats, has contributed to the ‘securitisation’ of the debate around cyber security issues. The fear is that this process will lead to possible dangers being overestimated and vulnerabilities being cast as national security threats of immediate concern. States’ reactions to these perceived risks may have negative implications both for citizens and for international peace and security.

Already we are seeing a potential consequence of securitisation, as governments turn to surveillance as a preventative measure against cyber attacks. In addition, the difficulty of attributing cyber attacks, together with the widespread fear that other countries will constantly engage in cyber espionage, has led some to claim that the ‘cyber realm’ favours the attacker. This, in turn, may lead states to engage in a ‘cyber arms race’ and foster a ‘Cool War’ dynamic of continuous attrition and escalation between states. This erosion of trust between states and the diminishing of civil liberties are two serious concerns with regard to the militarisation of cyber space.

Cyber attacks also pose serious transparency and accountability issues, owing to the technical complexity of attributing attacks mentioned above and to the ambiguous relationship between state and non-state actors (in the ‘Nashi’ attack in Estonia, for example, the relationship between the youth group responsible for the attack and the Russian government remains unclear). The lack of legal clarity in this area is also worrying, as it means attackers will often face no consequences for their actions.

The only existing international legislation in the field – the Budapest Convention – addresses only cybercrime and no further issues (such as the military use of cyberspace). The Convention also lacks the support needed to enforce its objectives, has no monitoring regime and has not been signed by Russia or China. Furthermore, an attempt to set out ‘rules’ on the legal implications of cyber war – the Tallinn Manual – found that the complexities of cyber conflict mean there are many instances that do not fit easily within current legal standards. The speed of technological change further hampers the drafting of both domestic and international law.

Growth of remote control warfare

The rise in cyber activities cannot be examined in isolation. It is part of a broader trend of warfare increasingly being conducted indirectly, or at a distance. Over the last decade, this global trend towards ‘remote control’ warfare has seen governments make increasing use of drones, special forces, private military and security companies, cyber activities, and intelligence and surveillance methods.

Indeed, the global export market for drones is predicted to grow nearly three-fold over the next decade, and a broader range of states are now using drones, including France, Britain, Germany, Italy, Russia, Algeria and Iran. The US has more than doubled the size of its Special Operations Command since 2001, and private military and security companies are playing an increasingly important role in both Afghanistan and Iraq, with over 5,000 contractors employed in Iraq this year.

The idea of countering threats at a distance, without the use of large military forces, is a relatively attractive proposition, as the general public is increasingly hostile to ‘boots on the ground’. However, the concerns this latest report highlights with regard to cyber activities are echoed across all ‘remote’ warfare methods, whose covert nature creates serious transparency and accountability vacuums. Wider negative implications have also been identified where these methods are in use, from the detrimental impact of drone strikes in Pakistan to instability caused by special forces and private military companies in Sub-Saharan Africa. The militarisation of cyber space is part of this growing trend and, as with these other new methods of warfare, increased transparency and accurate information are essential to assess the real impact it is likely to have.

Esther Kersley is the Research and Communications Officer for the Remote Control project of the Network for Social Change. The project, hosted by Oxford Research Group and affiliated with its Sustainable Security programme, examines changes in military engagement, in particular the use of drones, special forces, private military and security companies, cyber warfare and surveillance.

Katherine Tajer is a Research Assistant for the Verification Research, Training and Information Centre (VERTIC).

Alberto Muti is a Research Assistant for the Verification Research, Training and Information Centre (VERTIC).

Featured image: The command line environment in MS-DOS. Source: Flickr. Available under Creative Commons v2.0.

With nearly 870 million people chronically undernourished, and progress towards the Hunger Millennium Development Goal ebbing since 2008, feeding the world will continue to be a major global challenge. The limitations of arable land availability, water accessibility, and humanity’s increasing population trajectory further compound the problem. Addressing the challenges to global food security while ensuring the sustainability of the planet will require changes to the way we interact with agriculture and a clear understanding of the driving factors behind it.

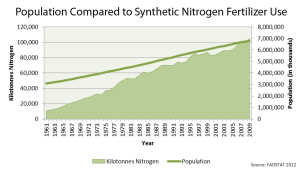

The industrialisation of agriculture over the last five decades has contributed to massive gains in productivity, but it has also made food increasingly susceptible to fluctuations in energy supply and prices. Energy in the form of oil and gas is needed to run industrial farm equipment and to ship food around the world. Fertilizers, the driving factor behind most yield increases, are intimately tied to energy and are therefore exposed to its price volatility. Nitrogen fertilizers are particularly significant: they are made through a process that combines natural gas with inert nitrogen from the atmosphere in a high-energy reaction to create ammonia. Fertilizer production is estimated to account for more than 50 per cent of total energy use in commercial agriculture (Woods et al., 2010). Although shale gas has had a significant impact on the US natural gas market, global energy prices are expected to rise in the long term and become increasingly volatile, as shown by the graph to the right. Fertilizer costs will follow a similar trend, leading to variability in cost and availability. This can be especially difficult for small farmers in developing countries, whose resilience to price fluctuations is low.
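To make the link between natural gas and nitrogen fertilizer concrete, the ammonia synthesis described above (usually called the Haber-Bosch process, a name not used in the original text) can be sketched with idealised stoichiometry. This is a simplified illustration under stated assumptions, not a figure from Woods et al.: it treats natural gas as pure methane, assumes all of it serves as the hydrogen source via steam reforming followed by the water-gas shift, and ignores the additional gas burned to supply heat and compression.

$$
\begin{aligned}
\mathrm{CH_4} + \mathrm{H_2O} &\rightarrow \mathrm{CO} + 3\,\mathrm{H_2} && \text{(steam reforming)}\\
\mathrm{CO} + \mathrm{H_2O} &\rightarrow \mathrm{CO_2} + \mathrm{H_2} && \text{(water-gas shift)}\\
\mathrm{N_2} + 3\,\mathrm{H_2} &\rightleftharpoons 2\,\mathrm{NH_3} && \text{(ammonia synthesis)}\\[4pt]
3\,\mathrm{CH_4} + 6\,\mathrm{H_2O} + 4\,\mathrm{N_2} &\rightarrow 3\,\mathrm{CO_2} + 8\,\mathrm{NH_3} && \text{(net)}
\end{aligned}
$$

On these assumptions each mole of methane yields at most 8/3 (about 2.7) moles of ammonia. Because real plants also burn natural gas to run the synthesis at high temperature and pressure, actual gas use per tonne of ammonia is higher, which helps explain why nitrogen fertilizer costs tend to move with natural gas prices.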

There is no silver-bullet answer to this conundrum. However, the solution will likely be a combination of improving the efficiency of chemical fertilizer use and increasing the productivity and adoption of natural methods. Cross-cutting all of these solutions is the main driver of yields: nitrogen. Phosphorus and potash are also important elements of fertilizer, but nitrogen is the nutrient needed in the largest quantities. Just as a basic knowledge of how CO2 drives climate change is important for developing solutions to that problem, a basic knowledge of nitrogen is important for developing solutions to food security.